AI Assistant & Agent Automation

Overview

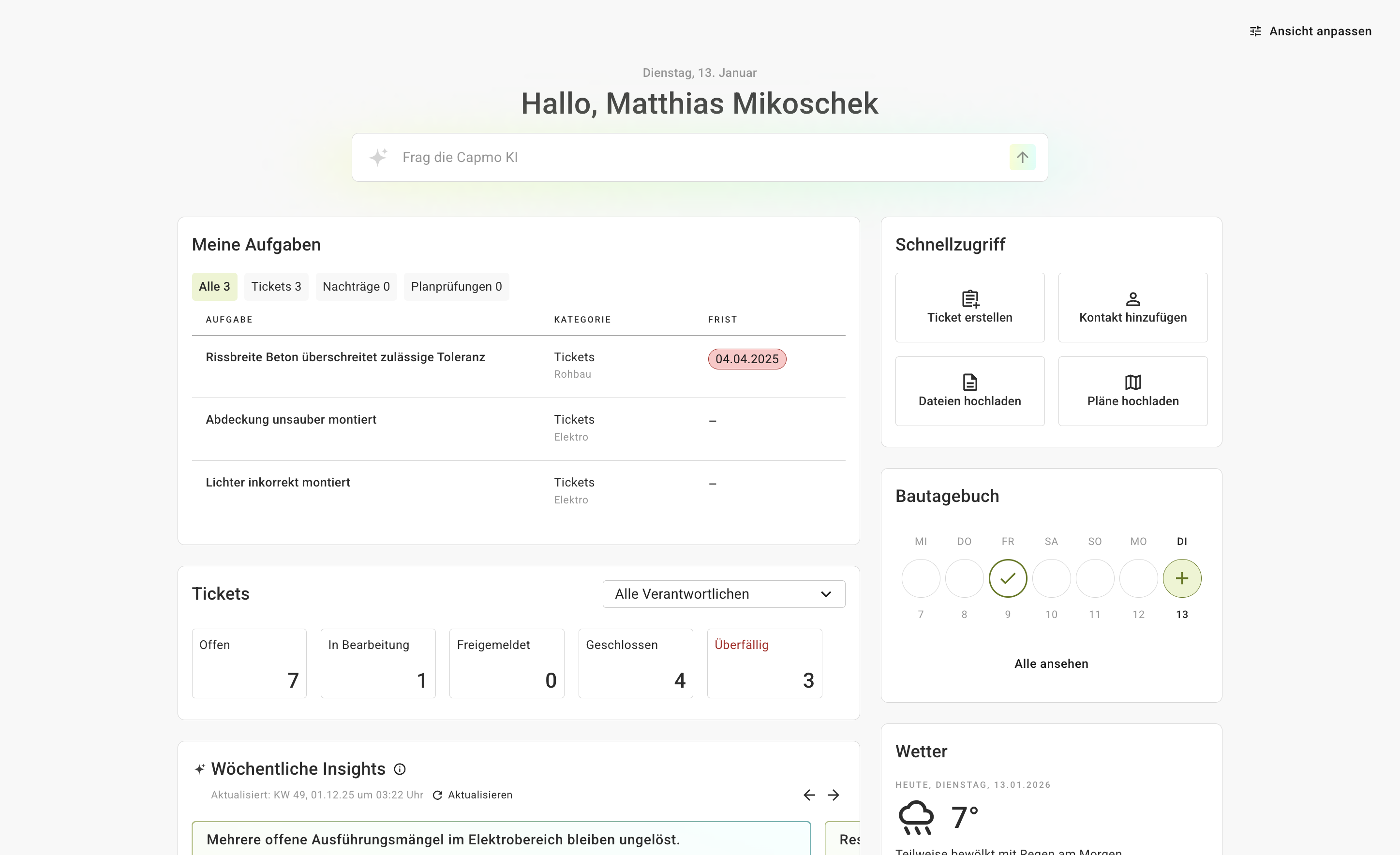

When I joined Capmo in late 2024, I was new to both the company and the industry. Construction in the DACH region is still heavily document-driven, fragmented across many tools and stakeholders, and generally less digitized than the software markets I had worked in before.

That meant the job was not just to "add AI." The harder part was figuring out where AI could remove real work, and where it would just create another layer of complexity.

The opportunity was obvious on paper: there is an enormous amount of project information in Capmo. Plans, reports, tickets, photos, messages. The problem is that this information is often hardest to access at the exact moment someone needs it.

So the question early on was not "what AI feature should we launch?" It was "where can we make daily project work meaningfully easier?"

The key product calls

- How do we make information actually usable?

  We started with search and retrieval because that was one of the clearest pain points. The first version of the AI Assistant was mostly about helping users get to the right information faster. Over time, we expanded it so it could work across more sources, use tools, and answer more complex questions with better reliability.

- How do we design for skeptical, non-technical users?

  Construction managers are practical and usually under time pressure. If something feels vague, experimental, or prompt-heavy, many people will simply ignore it. That pushed us toward experiences that felt concrete and operational rather than open-ended.

- Where does AI deliver the most value with the least friction?

  This was probably the most important product call. We moved away from treating AI primarily as a chat interface and toward embedded actions inside existing workflows. In many cases, a one-click automation was simply a better user experience than asking someone to type a good prompt.

What I focused on building

- AI Assistant grounded in project data

  We improved the assistant from a fairly basic retrieval layer into a more capable experience that could combine search, tool use, and structured product data. The goal was not to make it feel impressive in a demo. The goal was to make it dependable enough to be useful in actual project work.

- Embedded automations for common jobs

  Instead of asking users to explain what they wanted in natural language every time, we started packaging AI into concrete actions:

  - Change order validation helps review documentation and surface inconsistencies or risks.

  - "Improve my writing" polishes messages and reports directly in context.

  - AI-assisted ticket creation turns notes and discussions into structured follow-up work.

- The shift from reactive to proactive

  More recently, the work has been moving toward automations that run based on triggers or recurring workflows. That is where the product starts to shift from "helping a user do something" to actually taking work off their plate.

What this is changing

- AI is becoming part of real construction workflows instead of staying at the level of experimentation.

- The product direction is getting clearer: less generic chat, more embedded support and automation.

- Small, well-placed features are proving more valuable than broad, ambiguous ones.

This work is still ongoing, but the pattern is already pretty clear to me: the biggest wins do not come from giving users a blank AI box. They come from identifying the repetitive, messy parts of the job and quietly making them easier.